Normal test

The scipy.stats.normaltest function tests the null hypothesis that a sample comes from a normal distribution. It is based on D’Agostino and Pearson’s [1] [2] test that combines skew and kurtosis to produce an omnibus test of normality.

Suppose we wish to infer from measurements whether the weights of adult human males in a medical study are not normally distributed [3]. The weights (lbs) are recorded in the array x below.

import numpy as np

x = np.array([148, 154, 158, 160, 161, 162, 166, 170, 182, 195, 236])

The normality test scipy.stats.normaltest of [1] and [2] begins by computing a statistic based on the sample skewness and kurtosis.

from scipy import stats

res = stats.normaltest(x)

res.statistic

np.float64(13.03426312119258)

(The test warns that our sample has too few observations to perform the test. We’ll return to this at the end of the example.) Because the normal distribution has zero skewness and zero (“excess” or “Fisher”) kurtosis, the value of this statistic tends to be low for samples drawn from a normal distribution.
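To make the role of skewness and kurtosis concrete, the following sketch recomputes the statistic from its two ingredients. It assumes that the statistic is the sum of the squared z-scores returned by scipy.stats.skewtest and scipy.stats.kurtosistest, and it silences the small-sample warning mentioned above. The variable names z_skew, z_kurt, and k2 are illustrative only.

```python
import warnings

import numpy as np
from scipy import stats

x = np.array([148, 154, 158, 160, 161, 162, 166, 170, 182, 195, 236])

with warnings.catch_warnings():
    # Silence the small-sample warning discussed in the text.
    warnings.simplefilter("ignore")
    z_skew = stats.skewtest(x).statistic      # z-score for the sample skewness
    z_kurt = stats.kurtosistest(x).statistic  # z-score for the sample kurtosis
    k2 = stats.normaltest(x).statistic        # omnibus statistic

# The two printed numbers should agree up to floating-point error.
print(z_skew**2 + z_kurt**2, k2)
```

A large value of either z-score, in either direction, inflates the statistic, which is why the test is sensitive to departures in both skewness and kurtosis.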

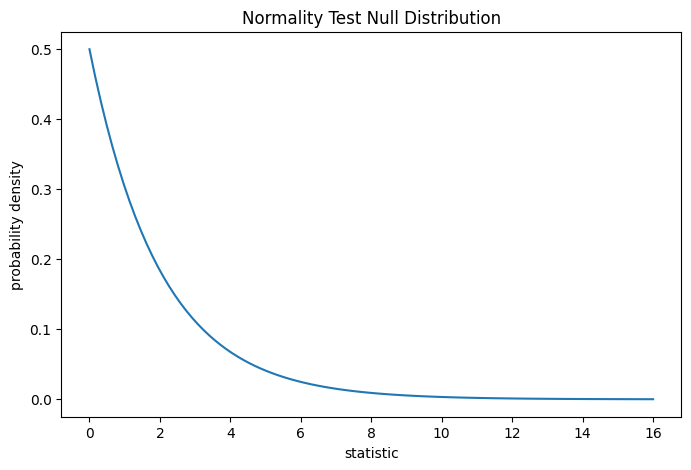

The test is performed by comparing the observed value of the statistic against the null distribution: the distribution of statistic values derived under the null hypothesis that the weights were drawn from a normal distribution. For this normality test, the null distribution for very large samples is the chi-squared distribution with two degrees of freedom.
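As a rough check of the large-sample claim, we can simulate many normally distributed samples and see how often the statistic exceeds the 95th percentile of the chi-squared distribution with two degrees of freedom. This is only a sketch: the seed, the number of simulated samples, and the sample size of 1000 are arbitrary choices made for illustration.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(12345)  # arbitrary seed for reproducibility
n_samples, n_obs = 5000, 1000       # illustrative sizes, not from the original

# Each row is one sample drawn under the null hypothesis of normality.
samples = rng.standard_normal((n_samples, n_obs))
sim_stats = stats.normaltest(samples, axis=1).statistic

# If the chi-squared approximation is good, about 5% of the simulated
# statistics should exceed its 95th percentile.
critical = stats.chi2(df=2).ppf(0.95)
exceed_rate = np.mean(sim_stats > critical)
print(f"critical value: {critical:.3f}, exceedance rate: {exceed_rate:.3f}")
```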

import matplotlib.pyplot as plt

dist = stats.chi2(df=2)

stat_vals = np.linspace(0, 16, 100)

pdf = dist.pdf(stat_vals)

fig, ax = plt.subplots(figsize=(8, 5))

def plot(ax):  # we'll reuse this
    ax.plot(stat_vals, pdf)
    ax.set_title("Normality Test Null Distribution")
    ax.set_xlabel("statistic")
    ax.set_ylabel("probability density")

plot(ax)

plt.show()

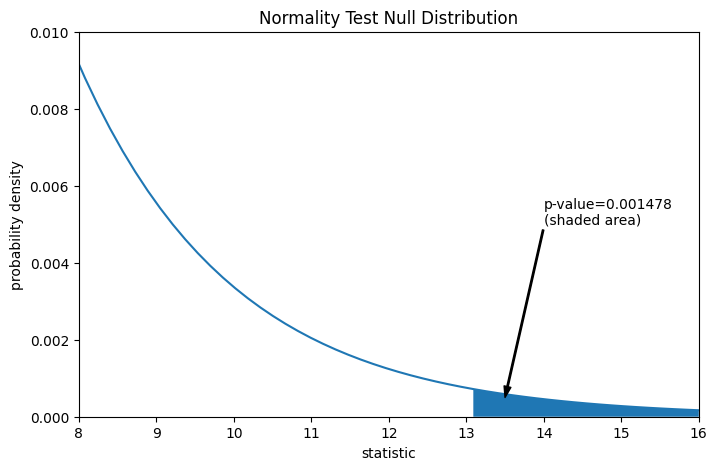

The comparison is quantified by the p-value: the proportion of values in the null distribution greater than or equal to the observed value of the statistic.

fig, ax = plt.subplots(figsize=(8, 5))

plot(ax)

pvalue = dist.sf(res.statistic)

annotation = (f'p-value={pvalue:.6f}\n(shaded area)')

props = dict(facecolor='black', width=1, headwidth=5, headlength=8)

_ = ax.annotate(annotation, (13.5, 5e-4), (14, 5e-3), arrowprops=props)

i = stat_vals >= res.statistic # index more extreme statistic values

ax.fill_between(stat_vals[i], y1=0, y2=pdf[i])

ax.set_xlim(8, 16)

ax.set_ylim(0, 0.01)

plt.show()

res.pvalue

np.float64(0.0014779023013100174)
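For two degrees of freedom, the chi-squared survival function has a simple closed form, exp(-s/2), so the asymptotic p-value can be checked by hand. The sketch below plugs in the statistic value reported above; the names p_sf and p_closed are illustrative.

```python
import math

from scipy import stats

statistic = 13.03426312119258  # value of res.statistic reported above

# Tail area of the chi-squared distribution with two degrees of freedom.
p_sf = stats.chi2(df=2).sf(statistic)

# Closed form of the same tail area: exp(-s / 2).
p_closed = math.exp(-statistic / 2)

print(p_sf, p_closed)
```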

If the p-value is “small” (that is, if there is a low probability of sampling data from a normally distributed population that produces such an extreme value of the statistic), this may be taken as evidence against the null hypothesis in favor of the alternative: the weights were not drawn from a normal distribution. Note that:

- The inverse is not true; that is, the test is not used to provide evidence for the null hypothesis.

- The threshold for values that will be considered “small” is a choice that should be made before the data is analyzed [4] with consideration of the risks of both false positives (incorrectly rejecting the null hypothesis) and false negatives (failure to reject a false null hypothesis).
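A minimal sketch of such a decision rule follows. The threshold alpha = 0.05 is purely illustrative and is not a recommendation from the original text; the point is that it is fixed before the p-value is inspected.

```python
import warnings

import numpy as np
from scipy import stats

alpha = 0.05  # illustrative threshold, chosen before looking at the data

x = np.array([148, 154, 158, 160, 161, 162, 166, 170, 182, 195, 236])

with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # small-sample warning, discussed in the text
    pvalue = stats.normaltest(x).pvalue

reject_null = pvalue < alpha
if reject_null:
    print("Reject the null hypothesis of normality.")
else:
    # Failing to reject is not evidence that the data are normal.
    print("Fail to reject the null hypothesis.")
```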

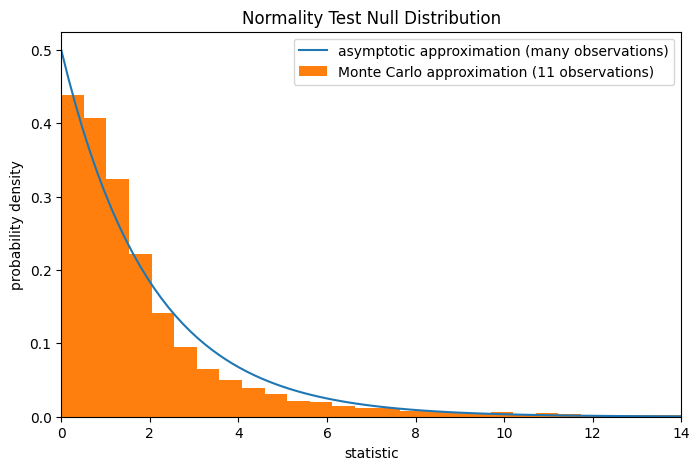

Note that the chi-squared distribution provides an asymptotic approximation of the null distribution; it is only accurate for samples with many observations. This is the reason we received a warning at the beginning of the example; our sample is quite small. In this case, scipy.stats.monte_carlo_test may provide a more accurate, albeit stochastic, approximation of the exact p-value.

def statistic(x, axis):
    # Get only the `normaltest` statistic; ignore approximate p-value
    return stats.normaltest(x, axis=axis).statistic

res = stats.monte_carlo_test(x, stats.norm.rvs, statistic,
                             alternative='greater')

fig, ax = plt.subplots(figsize=(8, 5))

plot(ax)

ax.hist(res.null_distribution, np.linspace(0, 25, 50),
        density=True)

ax.legend(['asymptotic approximation (many observations)',
           'Monte Carlo approximation (11 observations)'])

ax.set_xlim(0, 14)

plt.show()

res.pvalue

np.float64(0.0084)

Furthermore, despite their stochastic nature, p-values computed in this way can be used to exactly control the rate of false rejections of the null hypothesis [5].
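To show what such a Monte Carlo p-value involves, here is a hand-rolled sketch using only NumPy and scipy.stats.normaltest. It assumes 9999 resamples and the "plus one" adjustment in both numerator and denominator, which is our understanding of how such p-values are usually computed so that they are never exactly zero; the seed and variable names are arbitrary. Because the result is stochastic, it will be close to, but not identical to, the value reported above.

```python
import warnings

import numpy as np
from scipy import stats

x = np.array([148, 154, 158, 160, 161, 162, 166, 170, 182, 195, 236])

rng = np.random.default_rng(2024)  # arbitrary seed
n_resamples = 9999                 # assumed number of resamples

with warnings.catch_warnings():
    warnings.simplefilter("ignore")  # small-sample warning, discussed in the text
    observed = stats.normaltest(x).statistic
    # Draw samples of the same size as x under the null hypothesis.
    # The statistic does not depend on location or scale, so standard
    # normal draws are sufficient.
    null_samples = rng.standard_normal((n_resamples, x.size))
    null_stats = stats.normaltest(null_samples, axis=1).statistic

# Proportion of null statistics at least as extreme as the observed one,
# with the "plus one" adjustment.
n_extreme = np.count_nonzero(null_stats >= observed)
pvalue_mc = (n_extreme + 1) / (n_resamples + 1)
print(pvalue_mc)
```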