make_smoothing_spline#

- scipy.interpolate.make_smoothing_spline(x, y, w=None, lam=None, *, axis=0)[source]#

Create a smoothing B-spline satisfying the Generalized Cross Validation (GCV) criterion.

Compute the (coefficients of) smoothing cubic spline function using

lamto control the tradeoff between the amount of smoothness of the curve and its proximity to the data. In caselamis None, using the GCV criteria [1] to find it.A smoothing spline is found as a solution to the regularized weighted linear regression problem:

\[\sum\limits_{i=1}^n w_i\lvert y_i - f(x_i) \rvert^2 + \lambda\int\limits_{x_1}^{x_n} (f^{(2)}(u))^2 d u\]where \(f\) is a spline function, \(w\) is a vector of weights and \(\lambda\) is a regularization parameter.

If

lamis None, we use the GCV criteria to find an optimal regularization parameter, otherwise we solve the regularized weighted linear regression problem with given parameter. The parameter controls the tradeoff in the following way: the larger the parameter becomes, the smoother the function gets.- Parameters:

- xarray_like, shape (n,)

Abscissas. n must be at least 5.

- yarray_like, shape (n, …)

Ordinates. n must be at least 5.

- warray_like, shape (n,), optional

Vector of weights. Default is

np.ones_like(x).- lamfloat, (\(\lambda \geq 0\)), optional

Regularization parameter. If

lamis None, then it is found from the GCV criteria. Default is None.- axisint, optional

The data axis. Default is zero. The assumption is that

y.shape[axis] == n, and all other axes ofyare batching axes.

- Returns:

- func

BSplineobject An object representing a spline in the B-spline basis as a solution of the problem of smoothing splines using the GCV criteria [1] in case

lamis None, otherwise using the given parameterlam.

- func

Notes

This algorithm is a clean room reimplementation of the algorithm introduced by Woltring in FORTRAN [2]. The original version cannot be used in SciPy source code because of the license issues. The details of the reimplementation are discussed here (available only in Russian) [4].

If the vector of weights

wis None, we assume that all the points are equal in terms of weights, and vector of weights is vector of ones.Note that in weighted residual sum of squares, weights are not squared: \(\sum\limits_{i=1}^n w_i\lvert y_i - f(x_i) \rvert^2\) while in

splrepthe sum is built from the squared weights.In cases when the initial problem is ill-posed (for example, the product \(X^T W X\) where \(X\) is a design matrix is not a positive defined matrix) a ValueError is raised.

Array API Standard Support

make_smoothing_splinehas experimental support for Python Array API Standard compatible backends in addition to NumPy. Please consider testing these features by setting an environment variableSCIPY_ARRAY_API=1and providing CuPy, PyTorch, JAX, or Dask arrays as array arguments. The following combinations of backend and device (or other capability) are supported.Library

CPU

GPU

NumPy

✅

n/a

CuPy

n/a

⛔

PyTorch

✅

⛔

JAX

⚠️ no JIT

⛔

Dask

⚠️ computes graph

n/a

See Support for the array API standard for more information.

References

[1]G. Wahba, “Estimating the smoothing parameter” in Spline models for observational data, Philadelphia, Pennsylvania: Society for Industrial and Applied Mathematics, 1990, pp. 45-65. DOI:10.1137/1.9781611970128

[2]H. J. Woltring, A Fortran package for generalized, cross-validatory spline smoothing and differentiation, Advances in Engineering Software, vol. 8, no. 2, pp. 104-113, 1986. DOI:10.1016/0141-1195(86)90098-7

[3]T. Hastie, J. Friedman, and R. Tisbshirani, “Smoothing Splines” in The elements of Statistical Learning: Data Mining, Inference, and prediction, New York: Springer, 2017, pp. 241-249. DOI:10.1007/978-0-387-84858-7

[4]E. Zemlyanoy, “Generalized cross-validation smoothing splines”, BSc thesis, 2022. https://www.hse.ru/ba/am/students/diplomas/620910604 (in Russian)

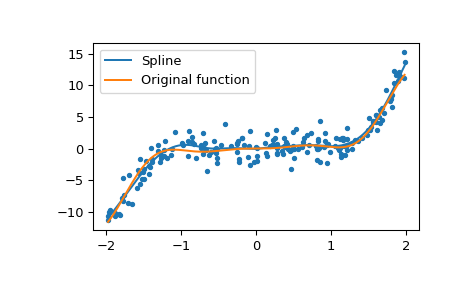

Examples

Generate some noisy data

>>> import numpy as np >>> np.random.seed(1234) >>> n = 200 >>> def func(x): ... return x**3 + x**2 * np.sin(4 * x) >>> x = np.sort(np.random.random_sample(n) * 4 - 2) >>> y = func(x) + np.random.normal(scale=1.5, size=n)

Make a smoothing spline function

>>> from scipy.interpolate import make_smoothing_spline >>> spl = make_smoothing_spline(x, y)

Plot both

>>> import matplotlib.pyplot as plt >>> grid = np.linspace(x[0], x[-1], 400) >>> plt.plot(x, y, '.') >>> plt.plot(grid, spl(grid), label='Spline') >>> plt.plot(grid, func(grid), label='Original function') >>> plt.legend(loc='best') >>> plt.show()